RESEARCHES

Smart Vision & Robotic Sensing

Professor, Robotics Laboratory

Smart Innovation Program, Graduate School of Advanced Science and Engineering

Hiroshima University


Idaku ISHII


- To establish high-speed robot senses that are much faster than human senses, we research and develop information systems and devices that achieve real-time image processing at 1000 frames/s or more. In addition to integrated algorithms that accelerate sensor information processing, we are studying new sensing methodologies based on vibration and flow dynamics, which are too fast for humans to sense.

Multi-Object Feature Extraction Based on Cell-Based Labeling


In this study, we introduce a cell-based labeling method that accelerates multi-object recognition by extracting the locations and features of multiple objects in an image. By implementing the cell-based labeling algorithm as hardware logic on a high-speed vision platform, multi-object feature extraction is performed at 2000 fps for 512×512 images.

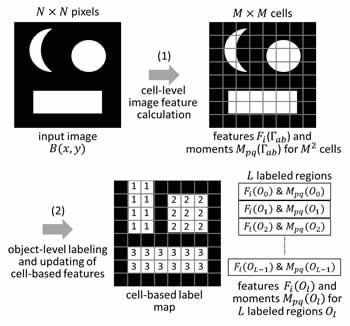

Cell-based labeling divides an image into subimage regions called cells and assumes additivity in the image feature calculation. This reduces both the number of pixels scanned for labeling and the memory needed to store label equivalences, without degrading accuracy in spatial resolution. Our cell-based labeling algorithm has two sub-processes: (1) cell-level image feature calculation for the divided cells, and (2) object-level labeling and updating of the cell-based image features. The figure above shows the concept of the cell-based labeling algorithm for moment feature calculation.
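The two sub-processes can be illustrated in software. The following is a minimal sketch and not the hardware logic described here: it assumes a binary image given as a list of rows, a hypothetical function name `cell_based_moments`, a hypothetical `cell` size parameter, and the simplifying rule that two non-empty cells sharing an edge belong to the same object.

```python
def cell_based_moments(img, cell=4):
    """Return sorted (M00, M10, M01) tuples, one per object, for a binary image.

    Assumption for illustration: non-empty cells that are 4-neighbours
    are treated as parts of the same object.
    """
    h, w = len(img), len(img[0])
    rows = (h + cell - 1) // cell
    cols = (w + cell - 1) // cell

    # Sub-process 1: cell-level moments, accumulated in image coordinates.
    cells = [[[0, 0, 0] for _ in range(cols)] for _ in range(rows)]
    for y in range(h):
        for x in range(w):
            if img[y][x]:
                m = cells[y // cell][x // cell]
                m[0] += 1      # 0th-order moment (pixel count)
                m[1] += x      # 1st-order moment in x
                m[2] += y      # 1st-order moment in y

    # Sub-process 2: label cells (not pixels) with a union-find structure.
    parent = list(range(rows * cols))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    def union(a, b):
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[max(ra, rb)] = min(ra, rb)

    for r in range(rows):
        for c in range(cols):
            if cells[r][c][0] == 0:
                continue
            if c > 0 and cells[r][c - 1][0]:      # left neighbour
                union(r * cols + c, r * cols + c - 1)
            if r > 0 and cells[r - 1][c][0]:      # upper neighbour
                union(r * cols + c, (r - 1) * cols + c)

    # Additivity: an object's moments are the sums of its cells' moments.
    objects = {}
    for r in range(rows):
        for c in range(cols):
            m = cells[r][c]
            if m[0]:
                acc = objects.setdefault(find(r * cols + c), [0, 0, 0])
                for k in range(3):
                    acc[k] += m[k]
    return sorted(tuple(v) for v in objects.values())
```

In this sketch only `rows * cols` labels and equivalences are tracked instead of one per pixel, which is the source of the memory saving, while the moments themselves are still accumulated from every pixel.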

To accelerate the calculation of additive image features such as moment features, higher-order local autocorrelation (HLAC) features, and color histograms, we have implemented several hardware logic designs for cell-based labeling on IDP Express, an FPGA-based high-speed vision platform. In this implementation, additive image features of 1024 objects in an image can be extracted simultaneously for multi-object recognition by dividing the image into 8×8 cells, concurrently with the calculation of the 0th- and 1st-order moments that give the sizes and locations of the objects.
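The relation between the moments and the sizes and locations mentioned above can be written out as follows. This is the standard definition of image moments, given here as a sketch. The symbols are assumptions for illustration: $I(x,y)$ is the binary image, $O$ is an object, $\mathcal{C}(O)$ is the set of cells labeled as belonging to $O$, and $M_{pq}(c)$ is the moment computed over the pixels of cell $c$.

```latex
\begin{align}
M_{pq}(c) &= \sum_{(x,y) \in c} x^{p} y^{q}\, I(x,y), \\
M_{pq}(O) &= \sum_{c \in \mathcal{C}(O)} M_{pq}(c), \\
S(O) &= M_{00}(O), \qquad
(\bar{x}, \bar{y}) = \left( \frac{M_{10}(O)}{M_{00}(O)},\; \frac{M_{01}(O)}{M_{00}(O)} \right).
\end{align}
```

The second line is the additivity property: object-level moments are sums of cell-level moments, so they can be updated whenever cell labels are merged. The third line gives the size $S(O)$ and the centroid $(\bar{x}, \bar{y})$ of the object.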

The performance of these implementations, which execute multi-object feature extraction at 2000 fps for 512×512 images, has been verified through several experiments with high-speed moving objects.
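To put the stated frame rate in perspective, the short calculation below derives the pixel throughput and the per-frame time budget implied by 2000 fps at 512×512 pixels. The function names are hypothetical, and the figures are plain arithmetic from the numbers in the text, not measured results.

```python
def pixel_rate(width, height, fps):
    """Pixels that must be processed per second."""
    return width * height * fps


def frame_period_us(fps):
    """Time budget per frame in microseconds."""
    return 1_000_000 / fps


print(pixel_rate(512, 512, 2000))  # 524288000 pixels per second
print(frame_period_us(2000))       # 500.0 microseconds per frame
```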

Cell-based labeling for moment features

- WMV movie (2.3M): letters rotating at 16 rps

- WMV movie (2.2M): playing-card suits projected at 2000 fps

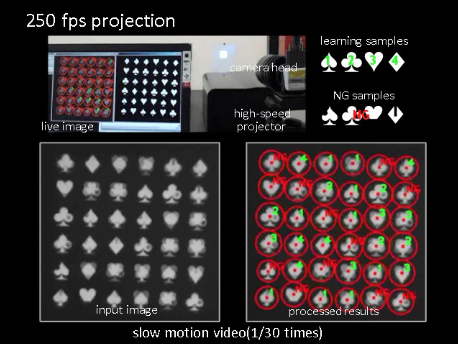

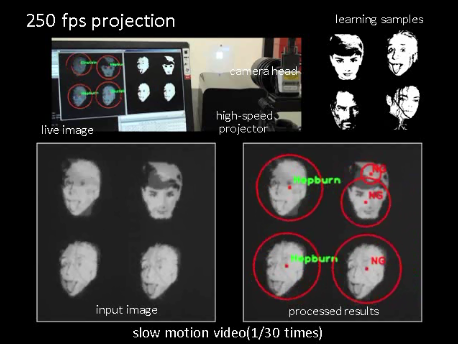

Cell-based labeling for 25 HLACs + moment features

- WMV movie (1.9M): learned playing-card suits projected at 250 fps

- WMV movie (1.0M): face patterns projected at 250 fps

Cell-based labeling for 16-color-bin histograms + moment features

- WMV movie (2.1M): 16 color-patterned objects rotating at 16 rps

- MPG movie (0.9M): up-and-down human hand movement with color-patterned bottles

References

- Qingyi Gu, Takeshi Takaki, and Idaku Ishii: Fast FPGA-Based Multi-Object Feature Extraction, IEEE Transactions on Circuits and Systems for Video Technology, Vol.23, No.1, pp.30-45 (2013)

- Qingyi Gu, Tadayoshi Aoyama, Takeshi Takaki, and Idaku Ishii: High Frame-rate Tracking of Multiple Color-patterned Objects, Journal of Real-Time Image Processing, doi: 10.1007/s11554-013-0349-y (online first) (2013)

- Qingyi Gu, Takeshi Takaki, and Idaku Ishii: 2000-fps Multi-Object Tracking Based on Color Histogram, Proc. SPIE 8437 (SPIE Photonics Europe / Real-Time Image and Video Processing), 8437E (2012)

- Qingyi Gu, Takeshi Takaki, and Idaku Ishii: A Fast Multi-Object Extraction Algorithm Based on Cell-Based Connected Components Labeling, IEICE Transactions on Information and Systems, Vol.E95-D, No.2, pp.636-645 (2012)

- Qingyi Gu, Takeshi Takaki, and Idaku Ishii: 2000-fps Multi-Object Recognition Using Shift-Invariant Features, Proc. Int. Symp. Optomechatronic Technologies (2011)

- Qingyi Gu, Takeshi Takaki, and Idaku Ishii: 2000-fps Multi-Object Extraction Based on Cell-Based Labeling, Proc. IEEE Int. Conf. on Image Processing, pp.3761-3764 (2010)